In the fast-evolving field of machine learning, Python has become a lingua franca, favored for its simplicity, readability, and vast ecosystem of libraries. For beginners and seasoned practitioners alike, these libraries make machine learning more accessible and efficient. This article walks through ten essential Python modules for anyone venturing into machine learning.

1. Scikit-learn

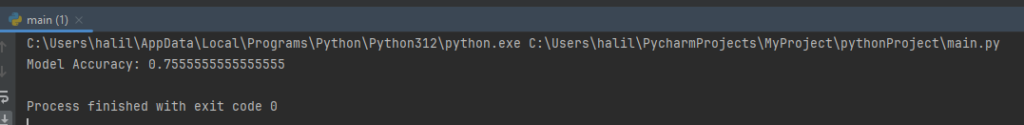

Scikit-learn is a comprehensive library that implements standard machine learning algorithms for tasks such as classification, regression, and clustering.

Example: Classification using the Iris dataset:

from sklearn import datasets

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestClassifier

# Load the Iris dataset

iris = datasets.load_iris()

X = iris.data

y = iris.target

# Split into training and test data

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3)

# Create and train the Random Forest classifier

clf = RandomForestClassifier(n_estimators=100)

clf.fit(X_train, y_train)

# Make predictions on the test data

predictions = clf.predict(X_test)
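
The example above stops at generating predictions. A minimal sketch of how they might be scored follows, assuming scikit-learn's `accuracy_score` metric and a fixed `random_state` (both are additions for illustration and not part of the original example):

```python
from sklearn import datasets
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Load the data and split it reproducibly (random_state=42 is an arbitrary choice)
iris = datasets.load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.3, random_state=42
)

# Train the same kind of classifier as in the example above
clf = RandomForestClassifier(n_estimators=100, random_state=42)
clf.fit(X_train, y_train)

# Compare the predictions with the held-out labels
predictions = clf.predict(X_test)
accuracy = accuracy_score(y_test, predictions)
print(f"Test accuracy: {accuracy:.2f}")
```

Fixing the seed only makes the split and the forest repeatable between runs; the reported accuracy still depends on which samples land in the test set.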

2. TensorFlow

Developed by Google, TensorFlow is widely used for deep learning projects.

Example: Creating a simple neural network:

import tensorflow as tf

# Create a simple model

model = tf.keras.models.Sequential([

tf.keras.layers.Dense(10, activation='relu'),

tf.keras.layers.Dense(10, activation='relu'),

tf.keras.layers.Dense(3, activation='softmax')

])

# Compile the model

model.compile(optimizer='adam',

loss='sparse_categorical_crossentropy',

metrics=['accuracy'])

# Train the model (on X_train, y_train data)

model.fit(X_train, y_train, epochs=10)

3. Keras

Keras is a high-level neural network library that runs on backends like TensorFlow.

Example: Handwritten digit recognition using CNN:

from keras.datasets import mnist

from keras.models import Sequential

from keras.layers import Dense, Conv2D, Flatten

# Load the MNIST dataset

(X_train, y_train), (X_test, y_test) = mnist.load_data()

# Reshape the data

X_train = X_train.reshape(60000,28,28,1)

X_test = X_test.reshape(10000,28,28,1)

# Create a CNN model

model = Sequential()

model.add(Conv2D(64, kernel_size=3, activation='relu', input_shape=(28,28,1)))

model.add(Conv2D(32, kernel_size=3, activation='relu'))

model.add(Flatten())

model.add(Dense(10, activation='softmax'))

# Compile and train the model (MNIST labels are integer class IDs, so use the sparse loss)

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

model.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=3)

4. PyTorch

Known for its dynamic computation graph and ease of use, PyTorch is particularly popular in the research community.
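
To make the phrase "dynamic computation graph" concrete, here is a small sketch. The function `piecewise` is a hypothetical example and not part of PyTorch; the point is that ordinary Python control flow decides which operations autograd records on each call:

```python
import torch


def piecewise(x: torch.Tensor) -> torch.Tensor:
    # Hypothetical function: the branch taken depends on the input value,
    # so the recorded graph can differ from one call to the next
    if x.item() > 0:
        return x ** 2
    return -3 * x


# Positive input: the graph records x ** 2, so the gradient is 2 * x
x_pos = torch.tensor(3.0, requires_grad=True)
piecewise(x_pos).backward()
grad_pos = x_pos.grad.item()

# Negative input: the graph records -3 * x, so the gradient is -3
x_neg = torch.tensor(-1.0, requires_grad=True)
piecewise(x_neg).backward()
grad_neg = x_neg.grad.item()

print(grad_pos, grad_neg)
```

Because the graph is rebuilt on every forward pass, loops and conditionals need no special graph-building syntax.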

Example: A simple linear regression model:

import torch

import torch.nn as nn

import torch.optim as optim

# Dataset (X and y values)

X = torch.tensor([[1.0], [2.0], [3.0]])

y = torch.tensor([[2.0], [4.0], [6.0]])

# Create a model

model = nn.Linear(1, 1)

# Loss function and optimizer

criterion = nn.MSELoss()

optimizer = optim.SGD(model.parameters(), lr=0.01)

# Training loop

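for epoch in range(100):

    optimizer.zero_grad()  # Reset gradients from the previous step

    outputs = model(X)  # Forward pass

    loss = criterion(outputs, y)  # Mean squared error

    loss.backward()  # Backpropagate

    optimizer.step()  # Update the weights

5. Pandas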

Pandas is used for data analysis and manipulation, and is well suited to cleaning and exploring tabular datasets.
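
Before the file-based example, here is a self-contained sketch of a typical cleaning step. The DataFrame contents and column names are made up for illustration, and filling numeric gaps with the mean is just one possible strategy:

```python
import numpy as np
import pandas as pd

# Hypothetical data with gaps; in practice this would come from pd.read_csv
df = pd.DataFrame({
    "age": [25.0, np.nan, 40.0, 31.0],
    "city": ["Paris", "Berlin", None, "Rome"],
})

# Count missing values per column
missing_counts = df.isna().sum()

# Fill numeric gaps with the column mean and text gaps with a placeholder
cleaned = df.fillna({"age": df["age"].mean(), "city": "unknown"})
print(cleaned)
```

Passing a dictionary to `fillna` lets each column get its own replacement value, which is often more sensible than filling everything with 0.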

Example: Reading a CSV file and basic data analysis:

import pandas as pd

# Read a CSV file

df = pd.read_csv('data.csv')

# Overview of the data

print(df.head())

print(df.describe())

# Fill missing data

df.fillna(0, inplace=True)

6. NumPy

NumPy is a fundamental library for numerical computations.
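
In machine learning work, much of that computation is vectorized array arithmetic. As a rough sketch (the feature matrix below is invented for illustration), standardizing the columns of a dataset takes only a few lines thanks to broadcasting:

```python
import numpy as np

# Hypothetical feature matrix: 3 samples, 2 features
X = np.array([[1.0, 10.0],
              [2.0, 20.0],
              [3.0, 30.0]])

# Column-wise statistics (axis=0 reduces over the rows)
mean = X.mean(axis=0)
std = X.std(axis=0)

# Broadcasting applies the per-column mean and std to every row
X_scaled = (X - mean) / std
print(X_scaled)
```

After scaling, each column has zero mean and unit standard deviation, and no explicit Python loop is needed.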

Example: Array operations:

import numpy as np

# Create an array

arr = np.array([1, 2, 3, 4, 5])

# Double the elements of the array

arr = arr * 2

print(arr)  # Output: [ 2  4  6  8 10]

7. Matplotlib

Matplotlib is used for data visualization.

Example: Plotting a simple line graph:

import matplotlib.pyplot as plt

# Data

x = [1, 2, 3, 4, 5]

y = [2, 4, 6, 8, 10]

# Plot the line graph

plt.plot(x, y)

plt.xlabel('X Axis')

plt.ylabel('Y Axis')

plt.title('Simple Line Graph')

plt.show()

8. SciPy

SciPy is used for scientific computations.

Example: Calculating an integral:

from scipy.integrate import quad

# Define a function

def integrand(x):

    return x**2

# Compute a definite integral

result, error = quad(integrand, 0, 1)

print("Integral result:", result)

9. NLTK (Natural Language Toolkit)

NLTK is used for natural language processing (NLP).

Example: Text tokenization:

import nltk

# Download the tokenizer data (recent NLTK releases may need 'punkt_tab' instead)

nltk.download('punkt')

from nltk.tokenize import word_tokenize

# A sample text

text = "Hello world. NLP with Python is fun!"

# Tokenize the text

tokens = word_tokenize(text)

print(tokens)

10. OpenCV

OpenCV is used for image processing and computer vision projects.

Example: Loading and displaying an image:

import cv2

# Load an image

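image = cv2.imread('image.jpg')

# cv2.imread returns None instead of raising if the file cannot be read

if image is None:

    raise FileNotFoundError("Could not read 'image.jpg'")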

# Display the image

cv2.imshow('Image', image)

cv2.waitKey(0)

cv2.destroyAllWindows()

Conclusion

Each of these Python libraries brings unique capabilities to the table, catering to various aspects of machine learning. Whether it’s data preprocessing, model building, visualization, or deep learning, these tools are designed to streamline the process and empower practitioners to develop sophisticated machine learning models. As the field grows, these modules continue to evolve, offering an ever-expanding toolbox for machine learning enthusiasts.